The whole industry is figuring out how to review AI-generated code. The code is better than a person would write, no doubt. But it’s not perfect, and any approver owns the production impact of its imperfections.

I think the answer here is both obvious and unsatisfying: We need several different review patterns. There’s no one ideal AI-review-bot; we need that bot plus other bots plus unit tests, production observability, gating releases behind flags, etc.

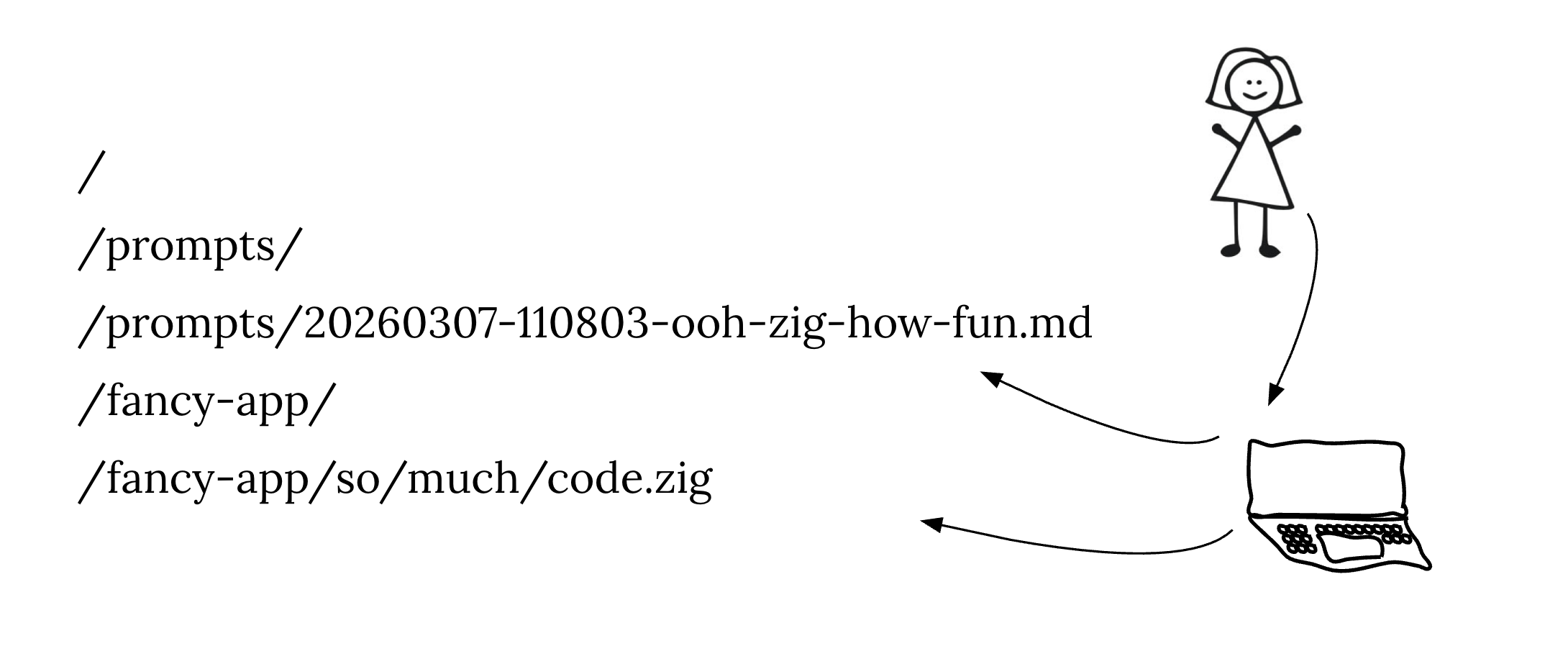

One pattern that might help is committing prompts to git. Human review capacity scales with human-generated content, and the prompts are the human-generated part.

To try this, I made a skill (promptlog.md) to sanitize all my prompts and commit them into ./prompts/ with the code.
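To make the idea concrete, here is a minimal Python sketch of what "sanitize and save" could look like. This is not the promptlog.md skill itself, which is a set of instructions for the agent. The redaction patterns, the `sanitize` and `log_prompt` names, and the timestamped filename scheme are all assumptions for illustration.

```python
import re
from datetime import datetime, timezone
from pathlib import Path

# Hypothetical redaction rules; a real setup would likely need more of them.
REDACTIONS = [
    (re.compile(r"AKIA[0-9A-Z]{16}"), "[REDACTED_AWS_KEY]"),
    (re.compile(r"(?i)\b(password|token|secret|api[_-]?key)\s*[=:]\s*\S+"),
     r"\1=[REDACTED]"),
    (re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"), "[REDACTED_EMAIL]"),
]


def sanitize(prompt: str) -> str:
    """Apply each redaction rule in order and return the cleaned prompt."""
    for pattern, replacement in REDACTIONS:
        prompt = pattern.sub(replacement, prompt)
    return prompt


def log_prompt(prompt: str, root: Path = Path("prompts"), now=None) -> Path:
    """Write the sanitized prompt to a timestamped file under root."""
    now = now or datetime.now(timezone.utc)
    root.mkdir(parents=True, exist_ok=True)
    # Assumed naming scheme: one file per prompt, named by UTC timestamp.
    path = root / f"{now:%Y%m%dT%H%M%SZ}.md"
    path.write_text(sanitize(prompt) + "\n", encoding="utf-8")
    return path
```

The sketch only writes the file; staging and committing it alongside the code is left to your normal git workflow. Two prompts logged in the same second would collide under this naming scheme, so a real version would want a sequence number or hash in the filename.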

It’s helping with an ambitious new project called robotocore that fully replaces LocalStack. You can review exactly how I built it.

I expect I’ll only review the prompts from inbound PRs. I’ll let bots check that the prompt was correctly implemented.

I’m writing this up as a post mostly so you can grab the skill if you want, but also to make a point. As LinkedIn fills up with “moar gastown” and people boasting about how many complex agents they’re using, I’ve had incredible results working linearly with one agent, guided thoughtfully by skills that I had it write for itself.

So, in case you’re feeling left behind, the best practices of engineering management seem to apply seamlessly to the work of agentic coding:

- Manage to output, don’t micromanage the process

- Develop an intuition for the capabilities of the person/agent you’re leading and fit the work to their strengths

- Inspect and interrogate as necessary, but don’t expect to understand everything until you ask, at which point you have to read a lot

- Celebrate the wins — it turns out that even machines respond better to being told what to do than what not to